I'm still learning and building, and this article is still in beta. I plan to keep optimizing, enhancing, and refactoring the project.

YouTXT is an app that transcribes any YouTube video and gives users several things to do with the transcribed text, such as extracting keywords and translating it.

Let's get started!

What will we be using?

Deepgram - It gives developers the tools they need to easily add AI speech recognition to their applications. We need an API key, so sign up here and get ~200 hours of free transcription.

OpenAI - It provides a range of artificial intelligence services, including machine learning, natural language processing, and computer vision. We will be using it only to extract the keywords in the video. Maybe we will use its power to level up the project later.

Streamlit - It allows you to quickly create and share beautiful, interactive data visualizations and applications in pure Python.

We also need a few packages, which we will install as we go.

Let's code it out!

We will build it piece by piece:

Extract audio from YT Video

Send the audio to Deepgram and get the transcription

Extract keywords using OpenAI

Translate the Transcript

Building UI with Streamlit

Extract audio from YT Video

For this step, we need the youtube-dl package, which lets us download videos from youtube.com.

pip install -U youtube-dl

Here's the "Embedding youtube-dl" documentation.

We will get the YouTube video URL from the user and pass it to the function where the download takes place.

First, let's extract the info of the video to be downloaded.

import youtube_dl

video_url = "https://youtu.be/kibx5BR6trA" # Any YT URL

videoinfo = youtube_dl.YoutubeDL().extract_info(url = video_url, download=False)

print(videoinfo)

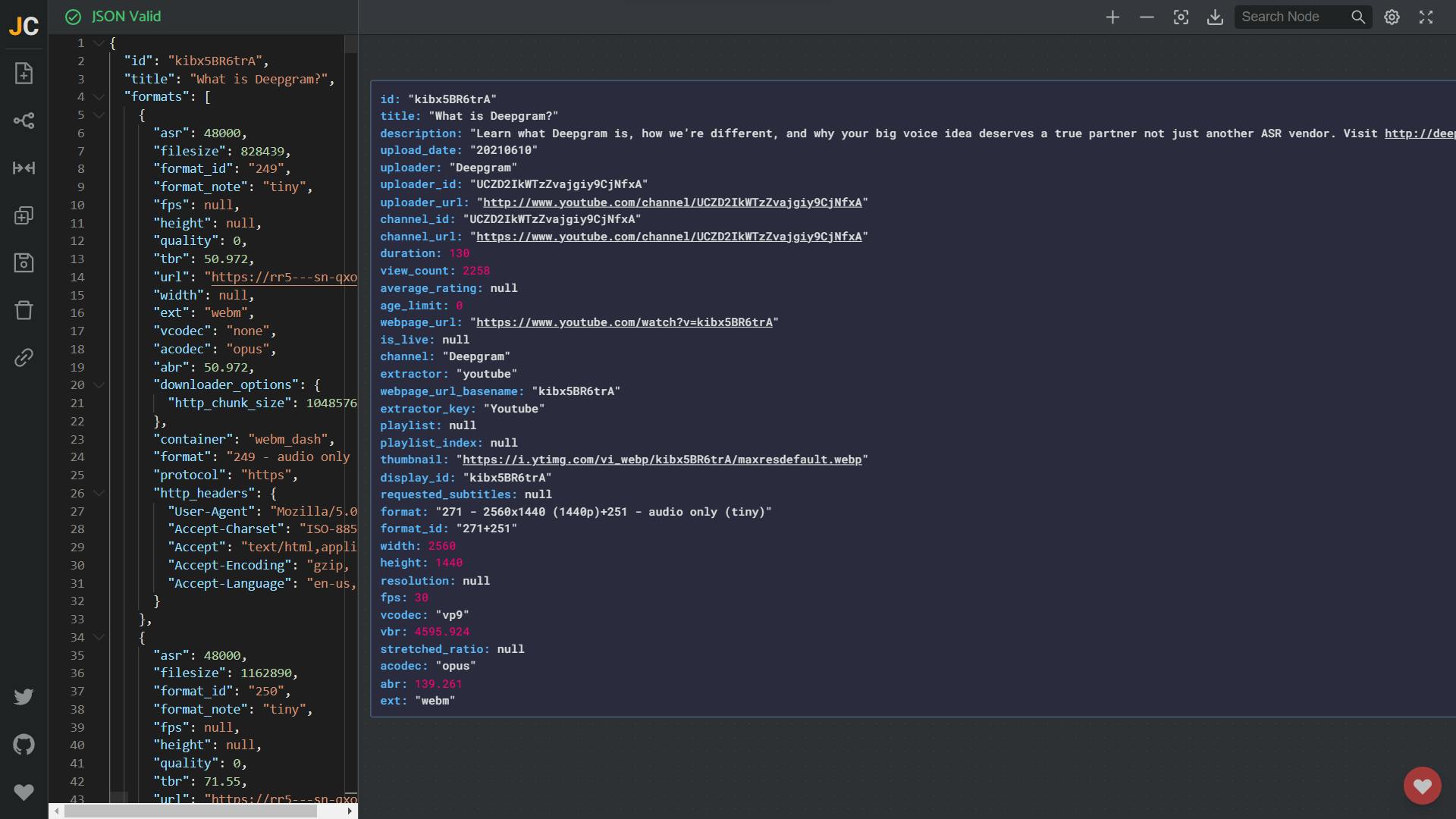

With the extract_info function we will get the response as follows:

It's quite large to visualize but anyway, all we need is the id and webpage_url. If you like to visualize the JSON output, check out jsoncrack.
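Since only two fields matter, a small helper can pull them out of the metadata dictionary. This is a minimal sketch: `pick_download_fields` is a hypothetical name, and the sample dictionary is made up to stand in for the real `extract_info` result.

```python
def pick_download_fields(videoinfo: dict) -> dict:
    """Keep only the keys the download step needs."""
    return {key: videoinfo[key] for key in ("id", "webpage_url")}


# Made-up stand-in for the much larger dictionary returned by extract_info
sample_info = {
    "id": "kibx5BR6trA",
    "webpage_url": "https://www.youtube.com/watch?v=kibx5BR6trA",
    "title": "placeholder title",
}
fields = pick_download_fields(sample_info)
```

A missing key raises a `KeyError`, which is probably what you want here: without the ID or the page URL there is nothing to download.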

We will create a file named based on their video ID.

videoinfo = youtube_dl.YoutubeDL().extract_info(url = video_url, download=False)

filename = f"{videoinfo['id']}.mp3"

options = {

'format': 'bestaudio/best',

'keepvideo': False,

'outtmpl': filename,

}

with youtube_dl.YoutubeDL(options) as ydl:

ydl.download([videoinfo['webpage_url']])

base = Path.cwd()

PATH_TO_FILE = f"{base}/{filename}"
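The download step can be wrapped in a function so the rest of the app only deals with a URL going in and a file path coming out. The following is a sketch under assumptions: `build_download_options` and `download_audio` are hypothetical names, and the options are the same three used above.

```python
from pathlib import Path
from typing import Optional


def build_download_options(video_id: str, folder: Path) -> dict:
    """Return youtube-dl options that save the audio as <folder>/<video_id>.mp3."""
    target = folder / f"{video_id}.mp3"
    return {
        "format": "bestaudio/best",
        "keepvideo": False,
        "outtmpl": str(target),
    }


def download_audio(video_url: str, folder: Optional[Path] = None) -> Path:
    """Download the audio track of video_url and return the path of the saved file."""
    import youtube_dl  # imported lazily so the helper above works without it

    folder = folder or Path.cwd()
    info = youtube_dl.YoutubeDL().extract_info(url=video_url, download=False)
    options = build_download_options(info["id"], folder)
    with youtube_dl.YoutubeDL(options) as ydl:
        ydl.download([info["webpage_url"]])
    return Path(options["outtmpl"])
```

Calling `download_audio(video_url)` would then give you the value that the article stores in `PATH_TO_FILE`.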

Let's Transcribe

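First, install the Deepgram SDK with pip:

pip install deepgram-sdk

Next, import the Deepgram client in our application code. The Deepgram class used here comes from version 2 of the SDK; newer major versions expose a different client class, so check which version you have installed.

from deepgram import Deepgram

To initialize it, create a single instance of the Deepgram client: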

dg_client = Deepgram(DEEPGRAM_API_KEY)
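Rather than hard-coding the key, you may prefer to read it from the environment, the same way the OpenAI example later in this article reads `OPENAI_API_KEY`. This is only a sketch: `read_api_key` is a hypothetical helper, and the `DEEPGRAM_API_KEY` environment variable name is an assumption, not something the SDK requires.

```python
import os


def read_api_key(env_var: str = "DEEPGRAM_API_KEY") -> str:
    """Return the API key stored in env_var, or fail with a clear message."""
    key = os.getenv(env_var, "").strip()
    if not key:
        raise RuntimeError(f"Set the {env_var} environment variable first")
    return key
```

With that in place, the client could be created as `dg_client = Deepgram(read_api_key())`.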

Now with the help of Deepgram's Official Python SDK, we will transcribe our downloaded file.

from deepgram import Deepgram
import asyncio, json

DEEPGRAM_API_KEY = 'YOUR_API_KEY'
# Placeholder: point this at the file saved by the download step
PATH_TO_FILE = 'some/file.wav'

async def main():
    # Initializes the Deepgram SDK
    deepgram = Deepgram(DEEPGRAM_API_KEY)
    # Open the audio file
    with open(PATH_TO_FILE, 'rb') as audio:
        # Set the mimetype to match the audio you actually downloaded
        source = {'buffer': audio, 'mimetype': 'audio/wav'}
        response = await deepgram.transcription.prerecorded(source, {'summarize': True, 'punctuate': True, "diarize": True, "utterances": True })
        # st.json(response, expanded=False)
        response_result_json = json.dumps(response, indent=4)

asyncio.run(main())

The above code initializes the Deepgram SDK and then opens the audio file. It then transcribes the audio file and gives us the result in JSON format. We will process it to show the results.
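Processing the result mostly means digging the text out of the nested response. The sketch below assumes the usual layout of a Deepgram prerecorded response (a `results` object with `channels`, `alternatives`, and, when `utterances` is enabled, a list of utterances with a `speaker` field when `diarize` is on). The helper names and the sample dictionary are hypothetical, so compare them against a real response before relying on them.

```python
def full_transcript(response: dict) -> str:
    """Return the transcript of the first alternative on the first channel."""
    return response["results"]["channels"][0]["alternatives"][0]["transcript"]


def speaker_lines(response: dict) -> list:
    """Return one 'Speaker N: text' line per utterance, in order."""
    lines = []
    for utterance in response["results"].get("utterances", []):
        lines.append(f"Speaker {utterance['speaker']}: {utterance['transcript']}")
    return lines


# Hand-written sample that mimics only the fields used above
sample_response = {
    "results": {
        "channels": [{"alternatives": [{"transcript": "Hello there. Hi."}]}],
        "utterances": [
            {"speaker": 0, "transcript": "Hello there."},
            {"speaker": 1, "transcript": "Hi."},
        ],
    }
}
text = full_transcript(sample_response)
lines = speaker_lines(sample_response)
```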

Extract Keywords

This is the keyword-extraction example from the OpenAI documentation:

import os
import openai

# Uses the legacy (pre-1.0) interface of the openai package
openai.api_key = os.getenv("OPENAI_API_KEY")

response = openai.Completion.create(
    model="text-davinci-002",
    prompt="enter the text here",  # the instruction plus the transcript go here
    temperature=0.3,
    max_tokens=60,
    top_p=1.0,
    frequency_penalty=0.8,
    presence_penalty=0.0
)
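Two small pieces surround that call: building the prompt from the transcript, and turning the returned text into a list. The following is a sketch with hypothetical helper names. The prompt wording is an assumption, and the parser simply assumes the model answers with keywords separated by commas or newlines.

```python
def build_keyword_prompt(transcript: str) -> str:
    """Prefix the transcript with a keyword-extraction instruction."""
    return f"Extract keywords from this text:\n\n{transcript}"


def parse_keywords(completion_text: str) -> list:
    """Split a comma- or newline-separated answer into unique, trimmed keywords."""
    keywords = []
    for part in completion_text.replace("\n", ",").split(","):
        word = part.strip(" -\t")
        if word and word not in keywords:
            keywords.append(word)
    return keywords


prompt = build_keyword_prompt("Deepgram turns speech into text.")
keywords = parse_keywords("\n\nDeepgram, Streamlit,\n- OpenAI, Deepgram")
```

With the legacy interface used above, the text to parse would be `response["choices"][0]["text"]`.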

Translate the Transcript

For this, we need a package called itranslate, which translates the transcript into the language the user selects. As always, install the package first:

pip install itranslate

To use the package, import it in our code. Below is a basic example of translating text with itranslate. In place of the sample text, pass in the transcription. For the to_lang parameter, we will use st.selectbox, which lets the user choose the language to translate into.

from itranslate import itranslate as itrans

itrans("test this and that") # '测试这一点'

# new lines are preserved, tabs are not

itrans("test this \n\nand test that \t and so on")

# '测试这一点\n\n并测试这一点等等'

itrans("test this and that", to_lang="de") # 'Testen Sie das und das'

itrans("test this and that", to_lang="ja") # 'これとそれをテストします'
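To connect this to a language picker, one option is a small mapping from display names to language codes plus a wrapper around the translator. This is a sketch: `LANGUAGE_CODES` and `translate_transcript` are hypothetical names, and the translator is passed in as an argument so the wrapper can be tried without network access.

```python
from typing import Callable

# Display name shown to the user -> code passed as to_lang
LANGUAGE_CODES = {"German": "de", "Japanese": "ja"}


def translate_transcript(
    transcript: str, language: str, translate: Callable[..., str]
) -> str:
    """Translate transcript into the chosen language; empty input stays empty."""
    if not transcript.strip():
        return ""
    return translate(transcript, to_lang=LANGUAGE_CODES[language])


def fake_translate(text: str, to_lang: str) -> str:
    """Stand-in translator used only for this demonstration."""
    return f"[{to_lang}] {text}"


demo = translate_transcript("test this and that", "German", fake_translate)
```

In the app you would pass `itrans` instead of `fake_translate`, and the keys of `LANGUAGE_CODES` would be the options offered by the select box.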

Building UI with Streamlit

If you are new to Streamlit, check out "You can just turn data scripts into apps!" to get started.

With the data we now have, we will build the UI using the Streamlit API. The widgets are simple and largely self-explanatory. Check out the API documentation.
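As a rough idea of how the pieces could meet in the UI, here is a minimal sketch of a page with a URL field, a language picker, and a button. The layout, the `looks_like_youtube_url` check, and the `render_app` name are all assumptions for illustration. The transcription and translation steps are left as a placeholder message.

```python
TARGET_LANGUAGES = ["de", "ja"]  # codes taken from the itranslate example


def looks_like_youtube_url(url: str) -> bool:
    """Very rough check; it only recognizes the two most common URL shapes."""
    return url.startswith(("https://youtu.be/", "https://www.youtube.com/watch"))


def render_app() -> None:
    """Draw the page; call this at the bottom of the script run by Streamlit."""
    import streamlit as st  # imported lazily so the helper above has no dependency

    st.title("YouTXT")
    url = st.text_input("YouTube video URL")
    language = st.selectbox("Translate transcript to", TARGET_LANGUAGES)
    if st.button("Transcribe"):
        if not looks_like_youtube_url(url):
            st.error("Please enter a YouTube video URL.")
            return
        # Placeholder for the download, transcription, and translation steps
        st.write(f"Would transcribe {url} and translate it to {language}.")
```

Add a `render_app()` call at the end of the file and start it with `streamlit run` followed by the script name.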

Created for Learn Build Teach Hackathon 2022